Scientists and Tech Leaders Warn of Artificial Intelligence Risks

January 13, 2015

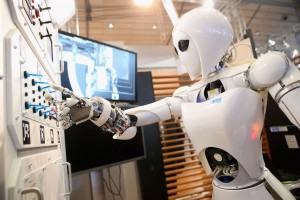

Will systems and robots featuring artificial intelligence (AI) help us to better live in our world or rule our world? Credit: © Sean Gallup, Getty Images/Thinkstock

On January 11, a number of prominent thinkers released an open letter urging society to consider the steadily increasing capabilities of artificial intelligence and to control its potential risks. Artificial intelligence, or AI, is a broad field of computer research that aims to imitate the capacity human beings have for intuition, problem-solving, and learning. The letter’s signers include Elon Musk, the South African-born entrepreneur who helped develop PayPal, Tesla Motors, and SpaceX, and Stephen Hawking, the British theoretical physicist who modernized our understanding of black holes.

The threat that artificial intelligence could conquer and oppress humanity has long been a staple of science fiction. Early science-fiction writers even came up with ways to decrease such a threat. For example, in the 1942 short story “Runaround” by American author Isaac Asimov, robots in a future world are programmed to follow laws designed to prevent them from injuring human beings, even if ordered to do so by humans. AI systems today, however, do not resemble the robots from science fiction.

The open letter, along with its attached research priorities document, gives an update on the current state of AI research. It notes that advanced AI systems are already widely distributed and safely used in many forms of technology, including Internet search engines, speech-recognition programs, and automobile systems. The letter also notes the many potential benefits of more advanced AI and disputes the notion that truly powerful AI is impossible, citing earlier predictions about nuclear energy and interplanetary travel that proved incorrect.

Imminent threats from real-world AI could involve physical attacks from such “killer robots” as autonomous armed drones (pilotless aircraft). But AI threats also include economic disruption from labor automation, in which workers are replaced by robotic machines and are unable to find other work. Another risk is that doctors could rely on flawed AI systems to diagnose and treat people with diseases. The letter urges researchers, lawmakers, and technologists to work together to consider how to prevent such systems from causing harm. For example, new laws might require autonomous AI systems to include a human decision-maker “in the loop.”

World Book articles and links: